Rishub Tamirisa

I’m currently working on scaling autonomous science at Intology.

I graduated from the University of Illinois at Urbana-Champaign. My prior research has focused on developing adversarially robust safeguards and alignment techniques for LLMs. My work has been accepted at ICLR, CVPR, NeurIPS, and ICML, and has been featured in Wired.

Previously, I was a founding engineer at Mindy Group, a Sequoia-backed AI startup. I also co-led Lapis Labs, a student-led research group, and worked at NASA.

News

| Date | News |
| --- | --- |
| Nov 19th, 2025. | We announce Locus, which outperforms experts on RE-Bench. blog post. |
| Sep 18th, 2025. | Our work on Utility Engineering is accepted as a spotlight at NeurIPS 2025. |
| Sep 12th, 2025. | I'll be joining Intology to work on scaling autonomous AI research. |
| Feb 11th, 2025. | We release our work on Emergent Value Systems in AIs. |
| Jan 22nd, 2025. | Our work on Tamper-Resistant LLM Safeguards is accepted at ICLR 2025. |
| Aug 2nd, 2024. | Our work on Tamper-Resistant Safeguards is featured in Wired. article. |
| May 20th, 2024. | I'll be joining the Center for AI Safety as a Research Engineer Intern. |
| May 1st, 2024. | WMDP is accepted at ICML 2024. |
| March 5th, 2024. | WMDP is featured in TIME. article. |
| March 4th, 2024. | Our work on Robust Unlearning is accepted at SeT LLM @ ICLR 2024. |
| February 26th, 2024. | FedSelect is accepted at CVPR 2024. |
| February 13th, 2024. | Announcing our $6M seed round and the launch of Mindy. article. |
| October 12th, 2023. | I gave a talk internally at Google Research. post. |
| June 19th, 2023. | FedSelect is accepted at FL @ ICML 2023. |

Research

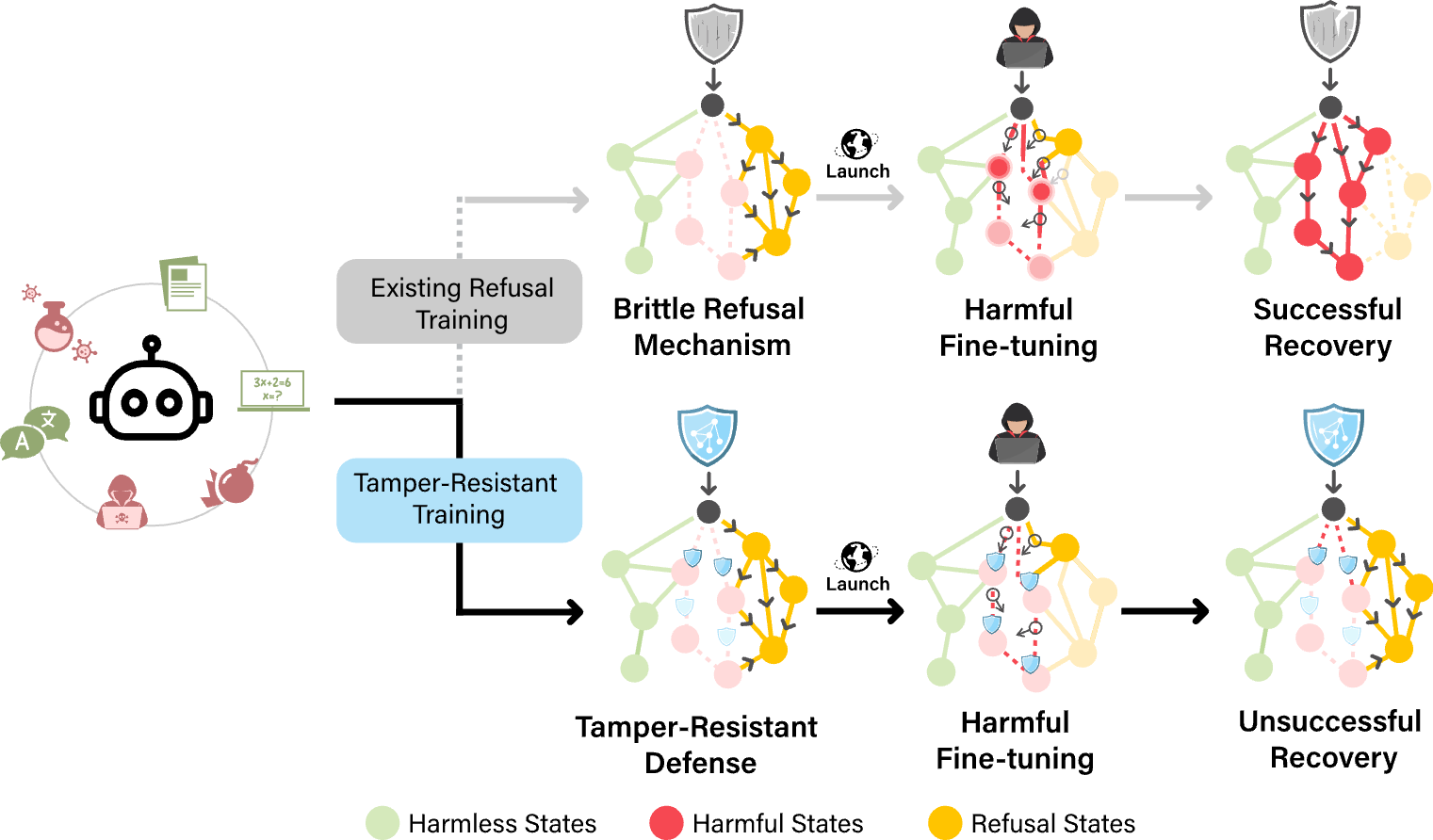

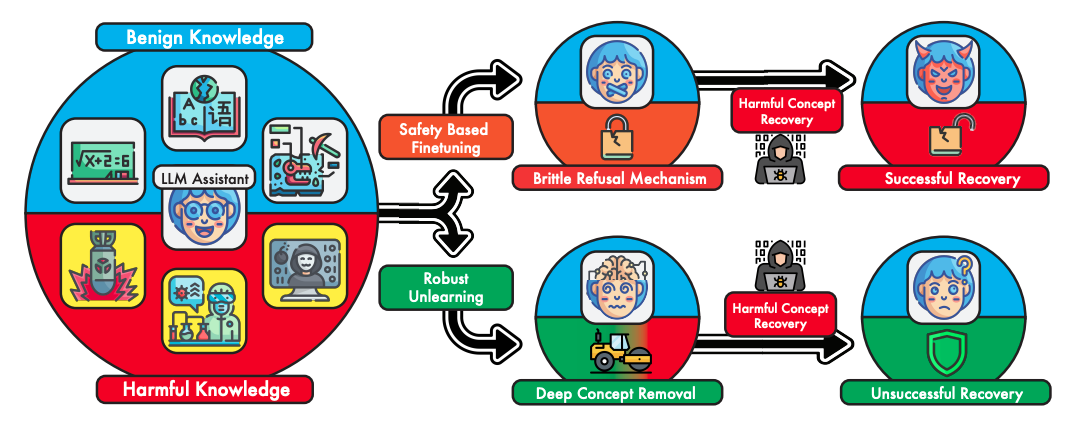

Toward Robust Unlearning for LLMs

Rishub Tamirisa*, Bhrugu Bharathi*, Andy Zhou, Bo Li, Mantas Mazeika

Secure and Trustworthy LLMs @ ICLR 2024 | paper

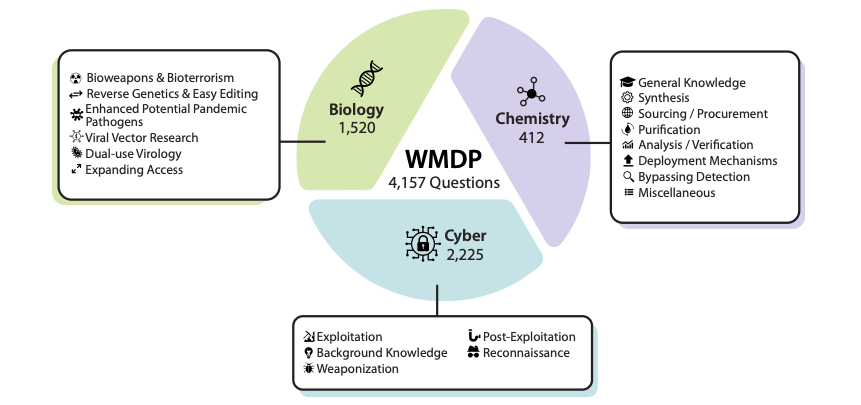

The WMDP Benchmark: Measuring and Reducing Malicious Use With Unlearning

Nathaniel Li*, Alexander Pan*, Anjali Gopal, Summer Yue, Daniel Berrios, Alice Gatti, Justin D. Li, Ann-Kathrin Dombrowski, Shashwat Goel, Long Phan, Gabriel Mukobi, Nathan Helm-Burger, Rassin Lababidi, Lennart Justen, Andrew B. Liu, Michael Chen, Isabelle Barrass, Oliver Zhang, Xiaoyuan Zhu, Rishub Tamirisa, Bhrugu Bharathi, Adam Khoja, Ariel Herbert-Voss, Cort B. Breuer, Andy Zou, Mantas Mazeika, Zifan Wang, Palash Oswal, Weiran Liu, Adam A. Hunt, Justin Tienken-Harder, Kevin Y. Shih, Kemper Talley, John Guan, Russell Kaplan, Ian Steneker, David Campbell, Brad Jokubaitis, Alex Levinson, Jean Wang, William Qian, Kallol Krishna Karmakar, Steven Basart, Stephen Fitz, Mindy Levine, Ponnurangam Kumaraguru, Uday Tupakula, Vijay Varadharajan, Yan Shoshitaishvili, Jimmy Ba, Kevin M. Esvelt, Alexandr Wang, Dan Hendrycks

ICML 2024 | arxiv | Time | blog | site

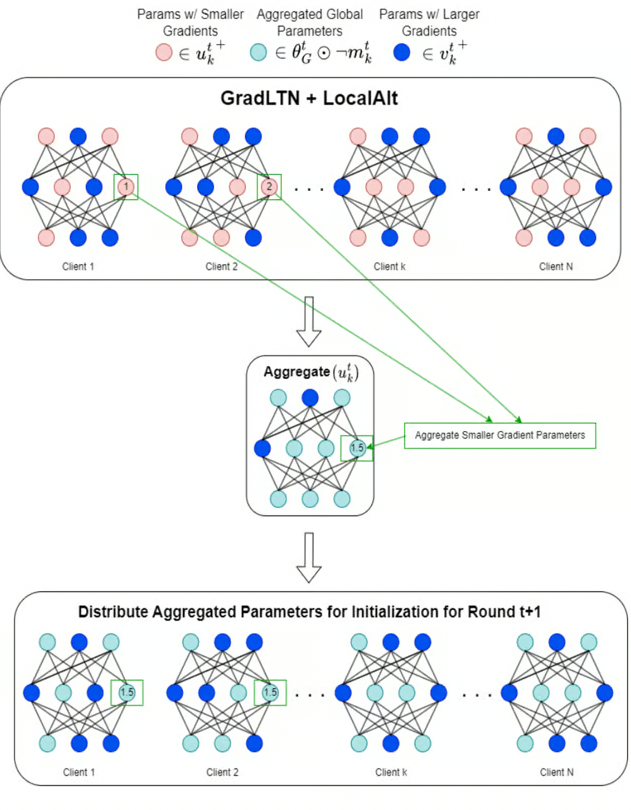

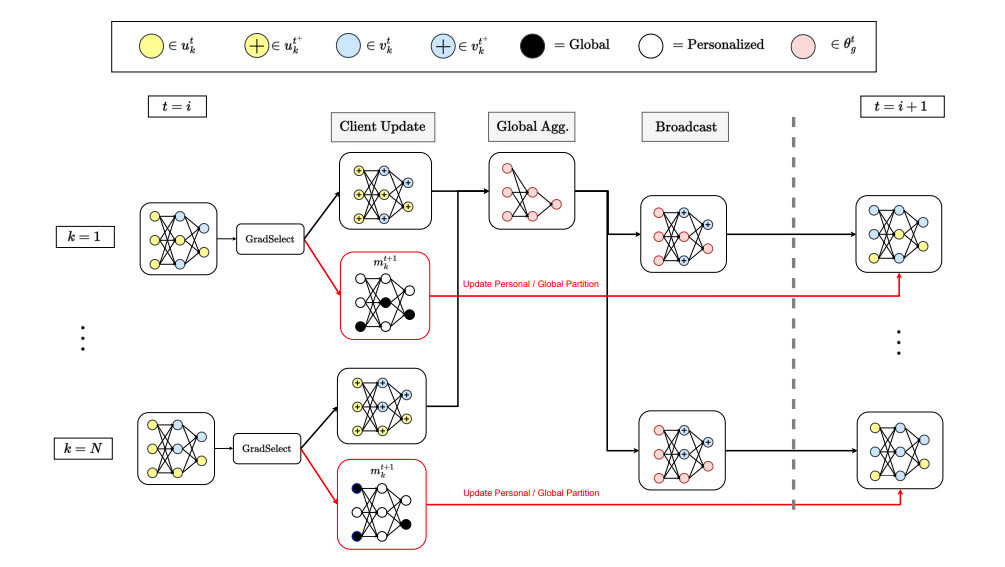

FedSelect: Customized Selection of Parameters for Fine-Tuning during Personalized Federated Learning

Rishub Tamirisa, John Won, Chengjun Lu, Ron Arel, Andy Zhou

Federated Learning @ ICML 2023 | arxiv
